Artificial intelligence (AI) is poised to transform society in terms of work, economy, politics, and culture. As these changes seem inevitable, people have become increasingly concerned about the ethics of AI, and more and more sociologists are studying the potential impacts of AI on society.

In this post, I examine how sociologists have studied Artificial Intelligence (AI) and how AI may impact sociology, with a focus on the field of Visual Sociology. I explore AI-generated text-to-image pictures with examples from my work on political inequality and protest.

I argue that we can interpret society through these AI-generated images and that we can use AI images to illustrate sociological concepts. There are limitations but there is also great potential.

What is Artificial Intelligence (AI)?

Artificial Intelligence (AI) has many definitions, but in the main it is a computer program that has the ability to think, reason, and learn in a manner that humans deem as “intelligent.”

What is intelligence? Intelligence has many definitions. A dictionary definition states that it is “the ability to acquire and apply knowledge and skills.” If we delve deeper, we see that it can mean the capacity to learn from experience, recognize problems, and solve problems (Cherry 2022).

Summary

Artificial Intelligence (AI) would be able to learn from what it experiences, recognize emergent problems, and solve those problems.

Sociology and AI: Early Studies

Sociologists have thought about AI for decades.

The first major sociological essay, Woolgar’s “Why Not a Sociology of Machines?” (1985), asks how AI machines can be social in the ways that humans are social. Beyond such metaphysics, Schwartz’s “Artificial Intelligence as a Sociological Phenomenon” (1989) argued what we now take for granted: namely, that humans design AI systems and feed them human-generated content.

As a consequence, humans filled AI with social biases.

Social Biases in AI

Recent studies have focused on social biases in AI, including research on inequalities and social change. Inequalities of AI are connected to the problem of social biases of algorithms. There are examples of biased algorithms that negatively impact health care delivery and crime prediction. According to a study by Miranda Bogen and colleagues, algorithms can introduce or amplify bias in hiring:

In a recent study we conducted together with colleagues from Northeastern University and USC, we found, among other things, that broadly targeted ads on Facebook for supermarket cashier positions were shown to an audience of 85% women, while jobs with taxi companies went to an audience that was approximately 75% black. This is a quintessential case of an algorithm reproducing bias from the real world, without human intervention.

Others have pointed to the positive potential of algorithms in that they can limit human biases and promote better diversity and inclusion, or can improve scientific journals’ peer review system. There are interesting sociological research uses, too. For example, the NoTap! Project has used AI to examine online protest.

AI Text-to-Image

AI can impact sociological knowledge through AI-generated images. These AI-generated images, e.g. text-to-image pictures, are increasingly popular. Products and companies such as Google Imagen, DALL-E, Midjourney, DeepAI, and Hugging Face and Stability AI (both of which use Stable Diffusion) have fueled their popularity.

America’s Got Talent in 2022 showcased an amazing example of AI generated images. The company Metaphysic generated a video hologram of a young Elvis singing and dancing onstage:

Metaphysic, an AI company, made it to the AGT 2022 finals and took 4th place. (The Mayyas, a Lebanese dance troupe, won AGT 2022.)

What is Text-to-Image?

In Text-to-Image, humans input text into an AI program and the AI program produces an image that it interprets from that text. How does it do so? The AI interprets the human text based on millions of images scraped from the internet. This is also the basis of facial-recognition software.

The Futures of AI Text-to-Image

The potential for abuse is immediately apparent. Currently, AI tools such as DALL-E have strict safeguards against sexual and violent images. But, as Metaphysic pointed out, Stability AI allows users to remove such safeguards from its own product:

In a revolutionary and bold move, the [Stability AI] model – which can create images on mid-range consumer video cards – was released with fully-trained weights, and the ability to easily remove the single line of code that prevents it creating pornographic or violent content.

The futures of AI text-to-image pictures are both bright and dark

The futures are bright with the possibilities of understanding society through AI, and dark with the potential for the mass proliferation of abusive images. Society, run by humans and aided by AI, will likely see both futures.

Sociology’s job is to understand those futures. One major field poised to do so is Visual Sociology.

What is Visual Sociology?

Visual Sociology may not have a strict definition, but the International Visual Sociology Association refers to it as “the visual study of society, culture, and social relationships.” The IVSA does not put limits on what a “visual” is. It includes photos, films, and other representations of society.

Visual Sociology and AI Generated Images

Since Visual Sociology is the only sociological field dedicated to using images to illustrate and interpret society, the field should embrace the study of AI text-to-image pictures. By the early 2000s, Visual Sociology looked to digital images from the internet, movies, and television. As of the 2020s, however, AI text-to-image generation has yet to make a dent in the field. Visual Studies, a journal of the field, still centers on photographs.

The bright and dark futures are coming. It will not be long before AI-generated text-to-image pictures flood the online world. The public, if not the art critics, will increasingly be unable to distinguish between what a human painted without the help of computer programs and what an AI computer program painted.

A human did not paint the picture above. DALL-E created it. I helped the AI to create it by inputting the information, “Basquiat painting of money and voice.” Jean-Michel Basquiat was a Brooklyn-born artist of Haitian and Puerto Rican descent famous for his neo-expressionist paintings. I used it to illustrate an essay I wrote on political inequality.

DALL-E generated this one, too. I inputted the information, “Klimt painting of democracy and money” for Afouxenidis’ guest post on “Neoliberalism and Democracy.” Gustav Klimt was an Austrian artist of the late 19th and early 20th centuries and a master of the Art Nouveau style.

I wanted eye-catching and non-copyrighted pictures for the essays on my website. I knew that the AI would attempt to create a “new” Basquiat and a “new” Klimt, but I had no idea how the AI would interpret the terms “money and voice” or “democracy and money.”

My claim to the creativity of the art is based on my access to the software. Without my text input, the AI would not have generated that picture. But the AI painted a picture that I could never paint.

The AI & I co-created these digital paintings.

We can interpret and illustrate society through AI-generated images

We know that Artificial Intelligence is capable of generating images of society. AI generates these images based on the program that humans created and the millions of images that these humans fed into the program. As with other socially biased algorithms, AI text-to-image pictures are a window into a new technology and into the society that created it. As a bonus, sociologists can use AI images to illustrate sociological concepts.

Let’s conduct an AI Visual Sociology experiment

I conducted an experiment to understand the potential of interpreting society through AI-generated images, and using AI-generated images to depict sociological concepts. For this experiment, I used DALL-E, because it is, at the moment, the best free and easy-to-use AI text-to-image generator.

Here’s what I learned.

The AI works best when the text mentions a specific object, color, and style.

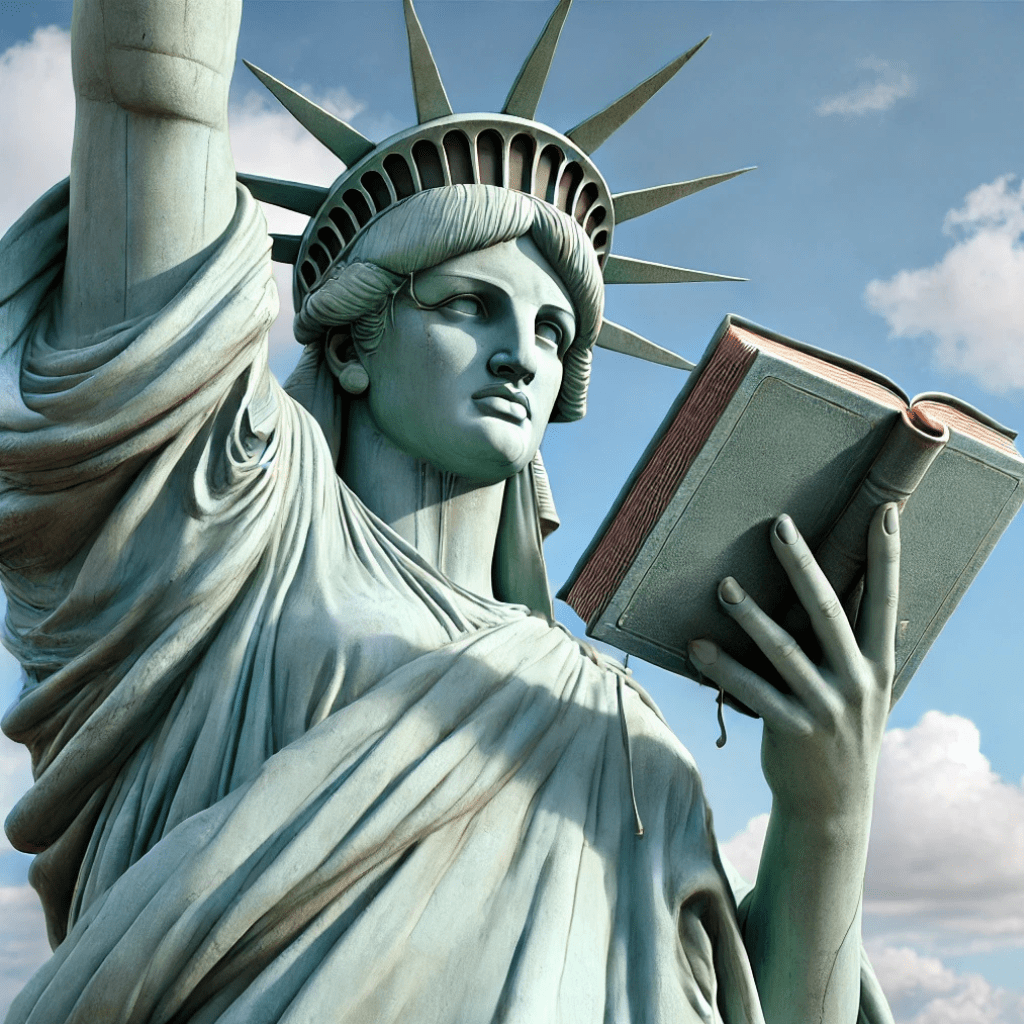

I wrote an essay on “The Many Definitions of Political Inequality.” I wanted a picture that illustrates politics, but in a scholarly way. An abstract “definitions of political inequality” would not work. So, to create the visual, I wrote into the program, “Photograph of the Statue of Liberty reading her book.” It came out well, though the distorted face is not ideal.

March 2025 Update

I tried this with DALL-E in March 2025 and got a somewhat better, though still not quite good, result:

The statue is in focus and the overall picture is clear, but it doesn’t look like she is actually reading the book. It looks like she is looking past the book, like someone who was reading and was interrupted, and is now annoyed.

AI struggles with abstract concepts

We cannot simply type in a single word for an abstract concept, like “neoliberalism,” and hope to get a worthwhile image. When I did so, I got unusable and unintentionally funny variations of this:

March 2025 Update

The same prompt in ChatGPT in March 2025 returned this image:

It’s still not good at words and well-known symbols, but it did a better job of interpreting the zeitgeist of neoliberalism with a globe, business people, and money symbols. It also focuses on North America and South America.

AI Images of Protest: It works better when the text mentions a physical object

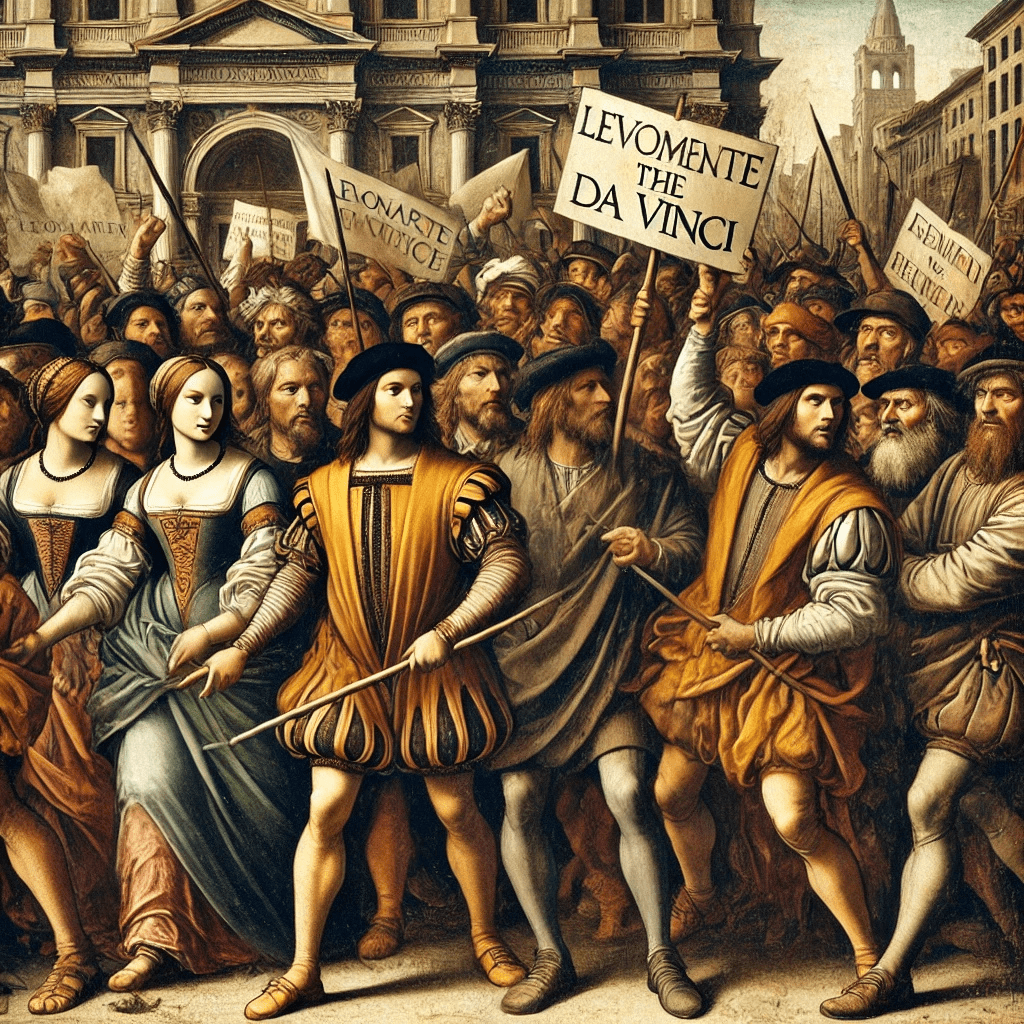

As I write many essays on political voice, I wanted many visuals of protest. Because I wanted images free from copyright, I turned to DALL-E. In 2023, I asked DALL-E for a “Leonardo da Vinci painting of people at a protest holding signs.” DALL-E provided four images. This is what the AI helped to create:

The gender representation surprised me. While the paintings are not clear, two of the protest paintings seem to be populated by women, though perhaps one can make an argument that they are gender neutral. I am reasonably certain that the AI had no idea that I have done extensive research in gender and politics. The result was good, though the faces at the bottom right quadrant are nightmarish.

ChatGPT has improved. Here is the same prompt for March 2025:

There are fewer women, but they are prominent at the front.

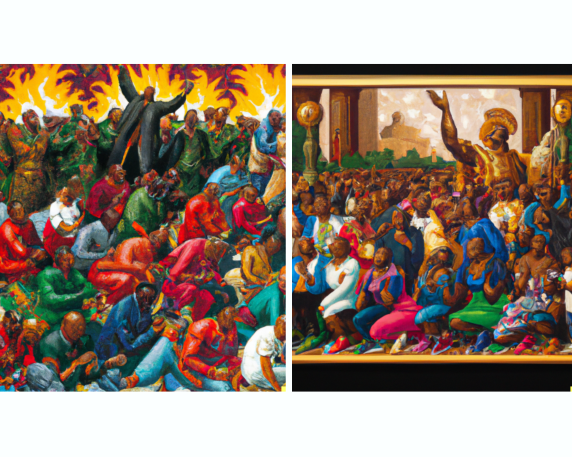

I have a difficult time interpreting some of the paintings. A good example of such is when I asked DALL-E, in 2023, for a “Kehinde Wiley painting of people protest.” Kehinde Wiley is an African American artist famous for his painting of President Obama. This is what I received:

I do not know how to interpret these paintings. We can look to what DALL-E’s developers fed the AI. Generally, the figures in Wiley’s paintings have a near-photorealistic quality. But these DALL-E paintings tend toward the expressionist. Wiley does paint African Americans, perhaps exclusively (I am not an expert in his work). He has painted African Americans in all white clothes and in armor of ancient times. But what these are, and how they are related to protest, I have no answer.

March 2025 Update

ChatGPT has since changed its copyright rules. When I asked for the same prompt in March 2025, it told me, “I wasn’t able to generate the image because it didn’t follow content policy guidelines. Let me know if you’d like a different approach, such as a painting inspired by Kehinde Wiley’s style without directly referencing his work.”

When I put in the prompt with “inspired by Kehinde Wiley,” it started to generate, then stopped, and told me: “I wasn’t able to generate the image because it didn’t follow content policy guidelines. If you’d like, I can create a painting with a vibrant, ornate background and realistic figures in a bold, heroic style, without directly referencing a specific artist.” Apparently, da Vinci is outside of copyright law.

Conclusion: Limitations and Potential

The Artificial Intelligence text-to-image generator has clear limitations but great potential.

As we learned from the “neoliberalism” example, the AI cannot handle single words that stand for abstract concepts. From the da Vinci paintings, the AI seems to understand the concept of people at a protest holding signs. It worked because “holding a sign” is physical and therefore interpretable. From the Wiley paintings, we see that the AI may have a difficult time understanding what “people protesting” means. “People protesting” may be too abstract a concept. The AI may even have a tough time figuring out how to mimic famous painters.

The examples could continue. We could ask DALL-E for a Kehinde Wiley (or da Vinci or Klimt or Basquiat) painting of neoliberalism, but I doubt it would return much of value. Indeed, by 2025, ChatGPT/DALL-E refuses to generate pictures in the style of modern artists. I encourage you to run your own experiments.

Through these examples, we learn that the AI has its own inscrutable interpretations of social phenomena. This is because the AI’s interpretations of human concepts depend on an algorithm and program built by OpenAI, the company behind DALL-E. And only OpenAI knows how the program works.

We also learned that we can use AI generated images to illustrate abstract sociological concepts. It is best if you ask the AI for something physical, with a definite style and color.
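For readers who want to run their own experiments more systematically, here is a minimal Python sketch. It assumes the “style of subject” pattern used in the prompts in this post (for example, “Klimt painting of democracy and money”). The function names `build_prompt` and `prompt_grid` are hypothetical, and the code only builds the prompt text; it does not call DALL-E or any other image generator.

```python
from itertools import product


def build_prompt(style: str, subject: str) -> str:
    """Join a visual style and a concrete subject into one prompt string."""
    return f"{style.strip()} of {subject.strip()}"


def prompt_grid(styles: list[str], subjects: list[str]) -> list[str]:
    """Return every style/subject combination so the results can be compared."""
    return [build_prompt(style, subject) for style, subject in product(styles, subjects)]


# Styles and subjects taken from the prompts used in this post.
styles = ["Leonardo da Vinci painting", "Photograph"]
subjects = [
    "people at a protest holding signs",
    "the Statue of Liberty reading her book",
]

for prompt in prompt_grid(styles, subjects):
    print(prompt)
```

Holding the subject constant while varying the style (or the reverse) makes it easier to see which part of the text the AI struggles with.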

Is the AI Text-to-Image Generator “Intelligent”?

The AI showed flashes of intelligence, but calling it “AI” may be a bit much. The DALL-E AI learned from what it experienced, that is, the millions of images that its developers fed it. It struggled to recognize emergent problems that are abstract. It did try to solve those problems, with some good and some not-so-good results. I appreciate the AI’s effort.

This post explained the limitations and promise of using text-to-image for sociological research and teaching. I hope that visual sociologists will embrace this emerging and increasingly popular technology and share what they learn.

Further Reading

- From Sociology to Quantification: The Rise of Big Data and Computational Social Science

- How Do Digital Technologies Impact Political Inequality?

- Artificial Intelligence (AI) and the Social Scientist

- How to use ChatGPT in Social Science Research

- Quantitative Research in the Social Sciences and ChatGPT

- ChatGPT Deep Research Output Misleads Us about Data Collection and Analysis

Joshua K. Dubrow holds a PhD from The Ohio State University and is a Professor of Sociology at the Polish Academy of Sciences.
